As we go through another year of staying at home, the once trendy work-from-home setup has now become a necessity. Working from home definitely has its ups and downs. However, with the right home office essentials, work at home will be as easy as pressing the button on your office microwave.

Why Is a Home Office Essential?

Having a home office is underrated. Sure, home is the last place you want to think about work. But right now, your home is the megacenter where you do everything. It’s a bakery, a gym, a movie theater, a daycare, and now your office.

All this can be very overwhelming, with everything competing for your attention at once. Your productivity and mental health will take a hit. So, what do you do? Compartmentalize. Having a designated place for a specific task is essential, and that includes having a home office.

Say you have a vacant room or a big enough corner space; set up camp for all your work needs there. Get it into your head that this space is for work and work only. Make sure that when you leave your home office, you leave work there, too. It’s a great way to strike a balance in your jack-of-all-trades household.

The Home Office Essentials Checklist

So, what exactly do you need when setting up a home office? Here’s a checklist of the home office essentials you need so you can check productivity and work promotion off your career goals list.

Your Computer

To start your remote work, you need a computer in your home office. Your computer is the first essential on the list. Without it, how exactly are you going to work? Let’s be honest, all kinds of work now require a computer to keep the business afloat.

Like your home, your computer will be the venue for many processes and vital communications with your colleagues and clients. So, make sure to check on your computer constantly. If you don’t have a computer yet, you can check out Amazon or the Apple Store for the latest computers available.

A variety of laptops and computers are readily available now. Each can perform the most mundane and the most complex tasks you need for work.

If you want a budget-friendly laptop that packs a punch on essential specifications, the Acer Aspire 5 will be a great addition to your home office. You can choose between Intel and AMD, both robust processors that can accommodate your multi-tasking needs in your home office.

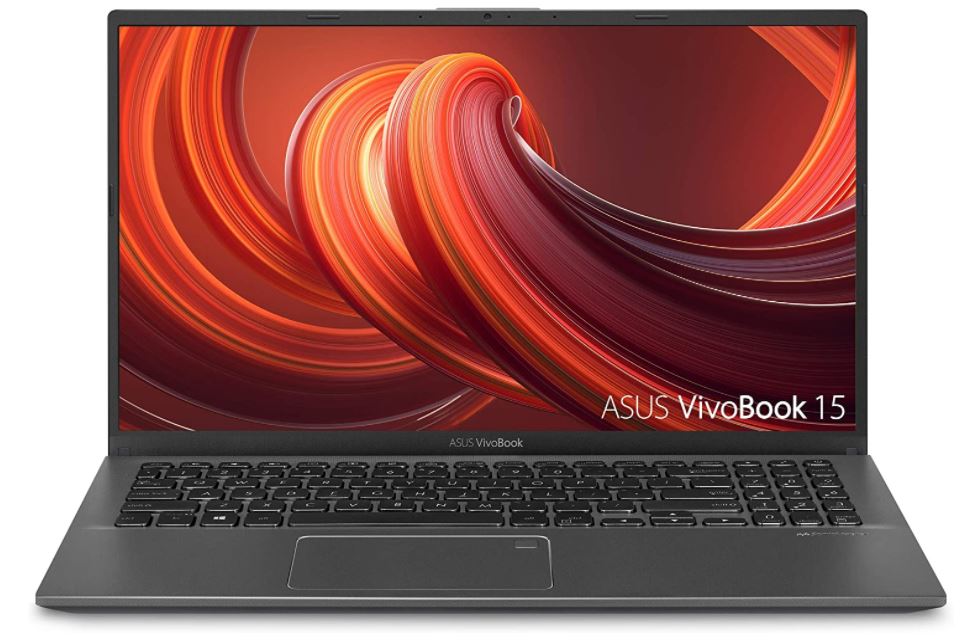

The ASUS Vivobook 15 is another great budget-friendly laptop that can give you an edge in your home office. It features 8 GB of RAM and a 128 GB SSD with a 10th generation Intel i3 CPU. This laptop can handle all kinds of tasks, from the simplest to the most complex.

The HP Laptop 15-dy1036nr may cost a bit more than the ones above, but this is a classic case of getting what you pay for. And with this laptop, paying more gets you more of the good stuff. It’s equipped with a 10th generation Intel i5 processor and a 256 GB SSD, which is more than enough to keep you on your work-from-home productive streak.

A Great Chair

Now, don’t underestimate the power of a great chair. You’ll be surprised how a simple chair can affect your overall health, especially after sitting idle for over eight hours every day. According to WebMD, sitting idle for too long can cause:

- Increased cancer risk

- Lower back pain

- Deep vein thrombosis

- Increased diabetes risk

- Anxiety spike

- Counteraction to exercise

- Weight gain

Aside from having standing breaks in between your work hours, having an ergonomic and comfortable chair can significantly help you, too.

That being said, having a great chair is definitely a home office essential. It provides lumbar support that puts you in the correct position while you work. It will be your best friend in your home office, and it’s worth investing in for the health benefits alone.

There are a lot of different ergonomic chairs available on the market now. And just like anything else, you need to do your research before buying one. The perfect chair differs for each person, so it pays to know your pain points when you sit. Note your preferences, too; the good thing is that most office chairs can be adjusted to whatever setting suits you.

Here are some of the great chairs on Amazon now.

The Gabryll Ergonomic Mesh Office Chair is one of the best-selling home office chairs on Amazon. It provides ergo support on your head, back, hips, and hands. You can also easily adjust the chair height, backrest, headrest, and armrest for your optimal seating position.

The ComHomia Office Chair is a classic home office chair with a modern design. It provides all the ergonomic support you need from nine to five. This chair also features a thick padded seat with a pneumatic seat adjustment and a tilt lock function for more comfortable sitting.

If you want to make a bold statement in your home office, the Flash Furniture High Back Office Chair will do just that. This home office chair not only gives you ergonomic support but also makes you feel like a badass boss with its faux leather and metal frame.

The Dragonn Ergonomic Kneeling Chair is a functional chair that won’t leave you hunching over your desk. It’s designed to give you the posture benefits of standing with the comfort of sitting. This ergonomic stool has a sturdy metal base that can support up to 250 lbs.

The Hbada Ergonomic Office Recliner Chair is a practical office chair that checks all the boxes for a great home office chair: ergonomic support, a breathable mesh seat, adjustable height, and durability. You can never go wrong with adding this recliner chair to your work-from-home setup.

An Office Desk

Another home office essential is an office desk. There’s no doubt a simple table can serve as a desk, but an office desk is so much more than a flat surface. It houses everything you need for work and keeps it within arm’s reach.

An office desk is both the command center and the battle station in your home office. So, it’s a must to have one that is sturdy, roomy, and above all in style—your style, at least. It’s your battle station—you’re the captain now.

There are different types of office desks you can choose from. You just have to make sure that it holds all the basics. Here are our top picks from Amazon.

The Cubi Cubi Study Desk is a standard home office desk you can definitely lean on. It’s sturdy and even has some bag hooks on the side for extra storage.

The Bestier L-Shaped Desk is best for corner home offices. If you have many desk accessories, don’t worry about not having enough desk space, because that won’t be a problem for this home office desk.

According to Forbes, the Uplift Desk V2 Standing Desk is the best standing desk that can keep you on the move throughout your shift. You can adjust this standing desk from 25.5″ to 51.1″.

If you have limited space in your home office, the Furinno Efficient Home Desk will still be able to give you enough space with its built-in shelves at the bottom.

Monitor and Laptop Stand

A monitor and a laptop stand are also high up on our home office essentials list. Have you seen the countless pictures of people hunched over their laptops, looking like they’re ready to ring the bells of Notre Dame? Well, you can avoid that by getting yourself a solid monitor or laptop stand.

A lot of shoulder and neck pain comes from hunching over to meet your screen. It’s one of the many avoidable aches you get from not having a monitor or laptop stand. A stand brings your screen up to eye level and keeps your back and neck upright. People often overlook simple ergonomics, but it’s the little things we do every day that factor into our health in the long run.

Another perk of using a monitor and laptop stand is providing your machine with proper ventilation. With its elevated position, the airflow below is no longer restricted and will keep your computer from overheating.

Here are four of the best laptop and monitor stands you can get on Amazon.

The Loryergo Monitor Stand can provide you with the ideal viewing height of 5.5 inches to keep your monitor at eye level.

The SimpleHouseware Metal Riser can help reduce neck and eye strain by raising your monitor or laptop to a comfortable height. This monitor stand also features a metal drawer and compartments on both ends to keep your desk essentials within arm’s reach.

The Roost Laptop Stand is your remote work buddy if you’re always on the go. It can fit and support all kinds of laptops and still provide you with the optimal height for ergonomic support.

The Soundance Laptop Stand can provide proper elevation for laptops 10 inches to 15.6 inches in size. It can also help to keep your laptop from overheating with its open base that doesn’t obstruct any airflow.

Keyboard and Mouse

Whether you’re on your laptop or desktop computer, you’re going to need a keyboard and mouse. Yes, even you, laptop users! Relying on a laptop’s built-in keyboard and cramped trackpad all day is not sustainable. It can cause problems with your wrists and posture down the line.

As mentioned, simple ergonomics can make or break your chances of having chronic pain in the long run. Investing in a good keyboard and mouse is a home office essential. It makes typing and overall computer experience easier and more fun, too. You can customize keyboard keys and get different shapes and colors of your mouse.

However, before you go crazy with the designs, you must consider their ergonomic properties and other functionalities. There are many different mice and keyboards available for different uses, some wireless and some wired.

Here are the top picks from Amazon.

The Razer Pro Type Wireless Mechanical Keyboard is a great home office addition. Type effortlessly and even feel compelled to work more with its soft and satisfying mechanical keyboard.

Now, the Logitech Ergo K860 Keyboard may not look like your typical keyboard, but your wrists will thank you in the long run for getting it. You can type more naturally with its curved and split keyframe. It features a pillowed wrist rest that can provide you with 54% more support.

You can choose from three cool neutral colors to match your home office theme with the Microsoft Surface Mouse. It’s portable, light, and ergonomic! The Microsoft Surface Mouse can give you precise mouse navigation without the hassle of cords.

If you decide on getting the Razer keyboard, go ahead and add the Razer Pro Click mouse to your cart. It features an ergonomic form factor that can give your wrists comfort throughout the day.

Speakers and Headphones

Speakers and headphones are home office essentials to ward off distractions that keep you from your productivity streak. Speakers for your motivational work mix and trusty headphones to cancel out the noise from your smart appliances and not-so-smart household members.

Speakers are not just for parties and blasting music anymore. These days, music is for more than recreational listening. That’s why audio peripherals are essential. Because to stay in the zone, you have to get in the zone. Speakers and headphones can drown out the external noise that doesn’t have any business in your workspace and keep you on top of your Google Meet and Zoom calls.

Check out our top picks for speakers and headphones on Amazon.

The Klipsch R-51PM Powered Bluetooth Speaker is a gold-standard Bluetooth speaker that can provide you with clear and surround sound. It features a cool and sleek design, too.

The Creative Pebble Plus is another great addition to complete your home office. It features an improved design that can give you a stronger bass performance and fill your room with clear audio.

The Bose Noise Cancelling Headphones 700 is definitely worth its price. This home office essential has 11 different levels of active noise canceling, letting you enjoy your podcasts and keeping you focused on your meetings.

The Anker Soundcore Life Q20 is one of the best noise-canceling headphones available now. It has been tried and tested to reduce background noise by up to 90%.

Power Surge Protector

Once you set up your home office, you’ll be surprised how quickly you fill every power socket in your space. Well, having a power surge protector can remedy that easily.

In any office, including at home, a power surge protector is essential. There are many different power surge protectors available now, with some including a USB port that can be very handy for smart appliances in your home office. You can put it anywhere there is free space, on the floor or even on the wall.

Here are the best power surge protectors on Amazon now.

The Kasa HS300 Smart WiFi Power Strip features six smart outlets that can let you monitor its power consumption. This home office power strip also has three USB chargers to provide you with more power supply for your home office gadgets and appliances.

The APC Essential SurgeArrest PE76 Surge Protector is a no-frills surge protector that can give a ready power supply for your whole home office.

The Nekteck Power Strip Surge Protector can be your power charging station as it features 12 outlets with two smart IC USB ports.

File Cabinets and Organizers

Even with everything going electric and paperless, a file cabinet is still a home office essential. It’s always best to keep hard copies of important documents, and a file cabinet can keep them safe as long as you want.

Organizers and file cabinets will keep your home office tidy and well kept. Papers, pens, books, and other office supplies can easily take over your workspace and drag your productivity down. So, make use of a simple organizer and file cabinet to keep them in their place.

Here are our top picks for file cabinets and organizers available on Amazon.

Keep your files safe and organized with the Devaise Three-Drawer Mobile File Cabinet with Lock. It features a compact and mobile metal body that can fit under most desks.

Your home office can get a whole lot cuter with the Intergreat Locking 3-Drawer Filing Cabinet while giving you a safe place to store your important files. This home office must-have can stand the test of time with its eco-friendly coat that makes it immune to corrosion and rust.

The Simple Houseware Mesh Desk Organizer can save you time hunting for your home office needs because it can fit all your stationery and home office supplies.

The Daltack 4-Tray Desktop File Organizer can keep your desk clean and tidy with its durable mesh drawer and shelves. It’s big enough to house your folders, calculators, pens, and many more.

Adequate Lighting

Lighting is everything. It can set the tone for how you want to start your day or night in your home office as you work. Also, with adequate lighting, you can see better, so let there be light!

You might think your screen light and room lighting are enough to illuminate your home office, but relying on them alone actually does more harm than good. Improperly lit workstations can cause eye strain and headaches, which is the last thing you want after a hard day’s work. So, turn down your screen brightness and turn on a desk lamp. It will give you better lighting that’s essential to boost your productivity.

Check out our top three lighting picks on Amazon.

If you want adequate lighting, you can never go wrong with choosing the BenQ e-Reading Desk Lamp. It features an auto-dimming mode while producing no glare to protect you from eye strain.

The OttLite Infuse LED Desk Lamp with Wireless Charging is a multi-purpose lamp that can give you proper illumination in your home office and charge your phone at the same time.

The Lepower Metal Desk Lamp is a heavy-duty home office lamp that does the job and still fits your aesthetic. You can have it stand on your desk or clip it onto shelves for better lighting.

Desk Accessories

Desk accessories are also home office essentials. Your desk accessories will make your home office your own. Here is where you can personalize more; you can put family photos on display, small green plants, or a motivational poster that says, “Hang in there!” You can add anything!

But, do note that this is still an office, and your desk accessories must be functional, too.

Here are some of the best Amazon desk accessories you can add to your home office setup.

Put some inspiration on display with the Umbra Gala Photo Display. This home office accessory combines two functions—a photo collage and a pen holder.

Having a desk leather pad can always up the ante on your home office setup. Aside from being stylish, it can also be used in multiple ways, like a mouse pad or a writing pad. Plus, it’s waterproof and easy to clean.

These Mkono Artificial Succulents deserve a place in your home office. Not only are they pretty to look at, but they can also have a calming effect when you look at the mess that is your desk.

Office Stationery

It’s not an office without office stationery. Notepads, pens, calendars, paper clips, and all the neon-colored sticky notes you can have are home office essentials.

Office stationery can help you organize your files and note important messages and reminders, which you’ll do the most in your office. There is various stationery out there, from your basic neon to marbled prints and even your face as the background.

Here are three of the best stationery picks you can add to your cart on Amazon.

The Multibey Marble Stationery Set features a ballpoint pen, a mini journal, two notepads, two wire binders, five mini wire binders, and 75 paper clips. That’s practically everything you need in terms of office stationery. Plus, you can choose from six gorgeous designs that can fit whatever aesthetic you’re going for in your home office.

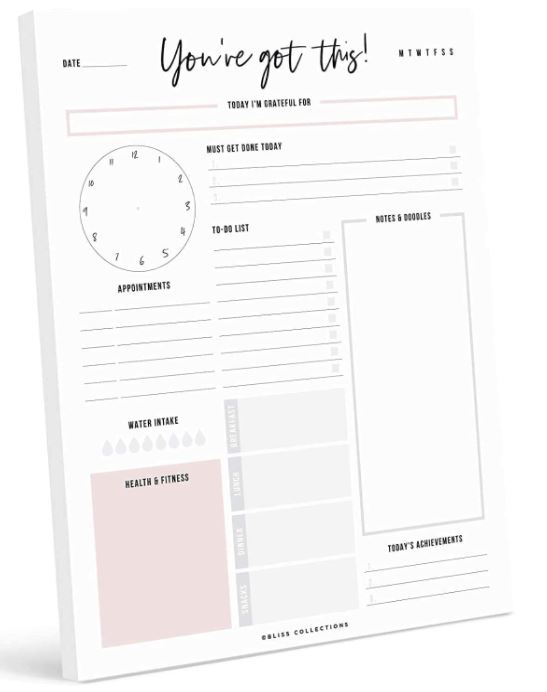

The Bliss Collections Daily Planner is something you’ll want in your home office if you want your stationery with more personality. It features a motivational design that can keep you on top of your daily tasks.

Get this set of sticky notes for only $9.27! It has all the different sticky notes you’ll ever use in different colors, no less.

How to Organize Your Home Office

Designate Your Work Zones

Your home office can be more organized if you designate work zones. If your job entails many things to do, it can be overwhelming when things are in just one place. Having a specific place for everything can help you improve your efficiency in your task because there will be fewer distractions.

Set Up an Organizing and Filing System

Having a filing system is essential to an organized home office. You don’t need an extensive system, just something that helps you easily navigate the warzone that is your home office.

Customize a Working Calendar

A working calendar does more than help you keep track of the days of the month. It’s essential for keeping you on schedule with different deadlines and tasks. Getting a customized working calendar can make all the difference in your home office dynamic and make you feel organized.

Keep Your Workspace Clean and Tidy

Of course, just like any part of your house, you need to keep your home office clean. Having a neat workspace will make or break your productivity and efficiency throughout your workday. So, a cute trash bin beside your desk for all your garbage counts as a home office essential, too. And simply put things back where they belong after you use them.

Put Inspiration on Display

Organizing your home office doesn’t stop in the physical space. You also have to organize your mind when you work. And putting inspiration on display can help you clear your mind or motivate you to focus on the task at hand.

It can be a motivational poster, a picture of your dream vacation destination, or your family, whatever gives you drive.

Let Natural Light In

Getting as much natural light as you can is definitely a home office essential. Staying indoors and not getting enough sunlight can take a toll on your productivity and concentration. Based on research from Harvard, natural light can reduce fatigue and headaches by up to 63 percent.

Pros and Cons of Working from Home

What We Like About Working from Home

- There is no commute to cost you time and money.

- Improved work-life balance.

- More flexibility in working hours.

- You’ll be able to spend more time with the family.

- Work absences will be reduced.

- Your technical and communication skills will improve.

What We Don’t Like About Working from Home

- You need a lot of self-discipline to keep yourself from distractions.

- The line between leisure and work might grow thinner.

- Miscommunication is unavoidable due to electronic glitches or internet interruptions.

- You lose living space in your house for a home office.

- It can be lonely.

- Relationships with colleagues and family members might be harder to form.

- Increased bills due to home office equipment.

Final Thoughts

During these times, you might have to accept that you’ll be working from home longer. It might be hard to navigate at first, but you got through a year, and you can get through another!

Equipping yourself with these home office essentials will make the new normal easier and maybe help you get that promotion in the comfort of your own home.

Whatever happens, it’s still business as usual at the end of the day. So, we hope this home office essentials checklist will help you check off your career growth too. But, remember you are still in your home, so make sure that you clock out everything work-related when you leave your home office too.