OpenAI, the San Francisco artificial intelligence laboratory co-founded by Elon Musk, introduced Dactyl in 2018. The robot hand, tagged the “spinner,” has five fingers just like a human hand. In 2019, the Technical University of Munich introduced H-1, a robot with sensors that work like human skin. Each day, we get closer to a future where robots live with humans rather than replace them.

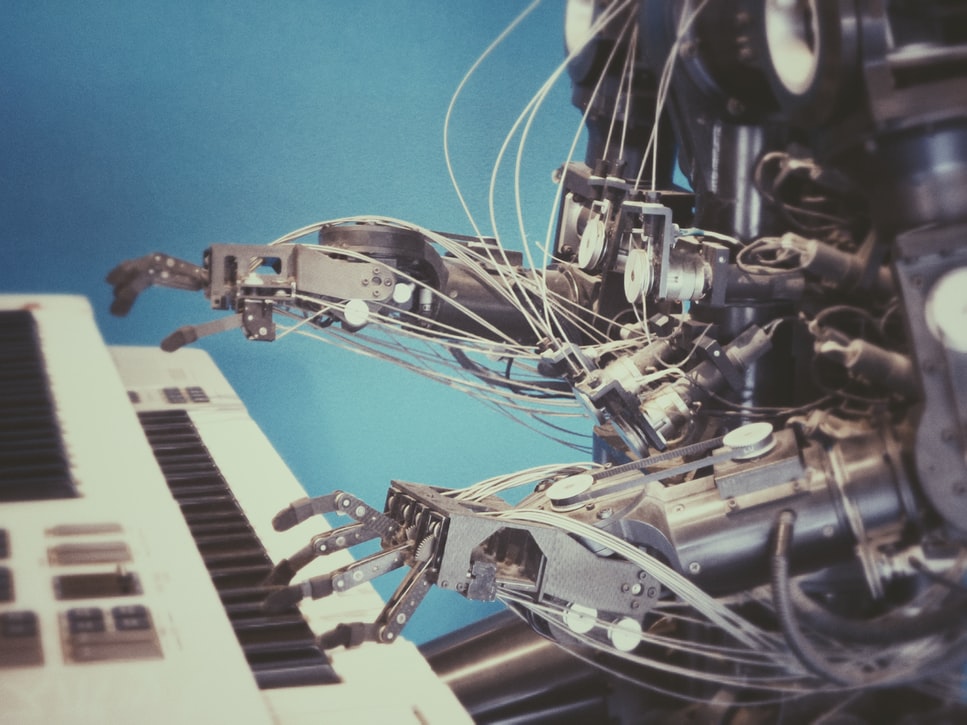

Robot Hands

Sensors are not unusual. Mass-produced sensors for many different uses are already on the market: heat sensors, sound sensors, and even pressure sensors. All of them exist to serve a purpose and to help us in our day-to-day lives. But when we dream of a future where robots are more likely to be our friends than mere assistants at home or in the office, we need robots smart enough to use different types of sensors simultaneously.

Robot hands are among the first in the industry to do this.

The University of California, Berkeley created robot hands that can do the most basic thing human hands can: grip. Dex-Net 2.0 has a parallel-jaw gripper run by a system that can recognize objects it has learned. This robot can not only grip but also group the objects it knows, such as a screw or pliers.

Dex-Net 3.0 came after Dex-Net 2.0. This newer version has a vacuum-based suction cup: instead of gripping objects, the robot picks them up by suction and groups them together. Dex-Net 3.0 can recognize and pick up objects even when it has not yet learned about them.

From this simpler technology came OpenAI’s Dactyl, a robot hand that mimics the human hand. It has five fingers and can recognize a cube, the different colors on each side of the cube, and the letter printed on each colored side. Dactyl twists the cube between its fingers and shows a particular side when told to do so. This robot is smart enough to show you the yellow side of the cube with the letter E printed on it.

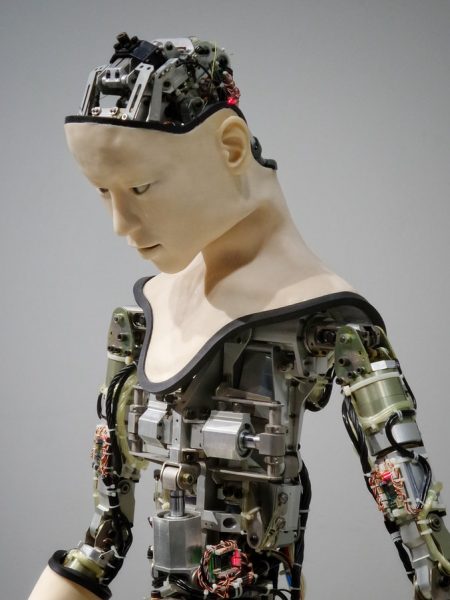

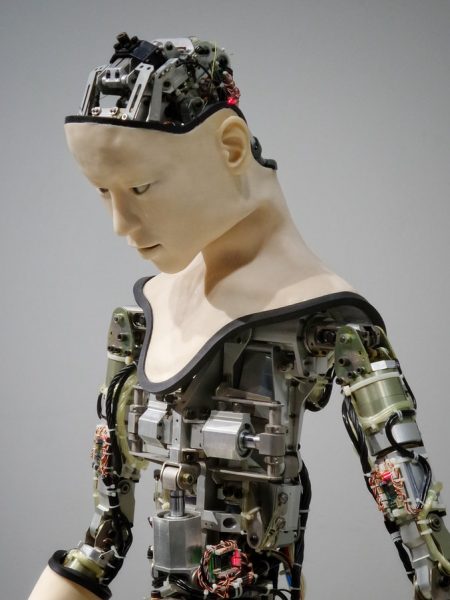

Robots With Human Skin

2019 was a breakthrough year for robotics. The University of California, Berkeley successfully combined Dex-Net 2.0 and 3.0 into an ambidextrous robot, a step closer to two working hands that resemble human hands. On top of this, the Technical University of Munich developed H-1: a robot that can feel.

H-1 can feel through the 1-inch hexagonal artificial skin cells attached to its body. This robotic technology tries to mimic how the human brain interprets signals. Earlier technology constantly processed everything the robot sensed. This is equivalent to a person having a breakdown because their brain is overwhelmed with too much information.

The H-1 computes information only when its senses are activated. When a human touches some of its 1,260 hexagonal skin cells, the H-1 senses the touch and computes this data only during the interaction.
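The idea of computing only when a sensor is activated can be sketched in a few lines of Python. This is a simplified illustration, not the H-1's actual software: the `SkinCell` class, the `event_driven_updates` function, and the 0.1 threshold are hypothetical names and values chosen for the example. Only the count of 1,260 cells comes from the article.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class SkinCell:
    """One hypothetical skin cell that remembers its last reported reading."""
    last_reported: float = 0.0


def event_driven_updates(
    cells: List[SkinCell],
    readings: List[float],
    threshold: float = 0.1,  # assumed value, for illustration only
) -> List[Tuple[int, float]]:
    """Return (cell index, reading) pairs only for cells whose reading
    changed by more than `threshold` since their last report."""
    events = []
    for index, (cell, value) in enumerate(zip(cells, readings)):
        if abs(value - cell.last_reported) > threshold:
            cell.last_reported = value  # remember what was reported
            events.append((index, value))
    return events


# 1,260 cells, as in the article; everything else is simulated.
cells = [SkinCell() for _ in range(1260)]
readings = [0.0] * len(cells)
readings[42] = 0.8  # simulate a touch on a single cell

events = event_driven_updates(cells, readings)
print(len(events), "of", len(cells), "cells needed processing")
```

With a single simulated touch, only one cell produces an event, and the other 1,259 readings are skipped. Calling the function again with the same readings produces no events at all, which is the saving the event-driven approach is after.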

This technology is still far from the 5 million receptors that humans have, but it is big progress. In the future, robots may do more than grip and pick things up. Robot hands combined with a full covering of artificial skin could care for the sick and give free hugs too.