Robotics

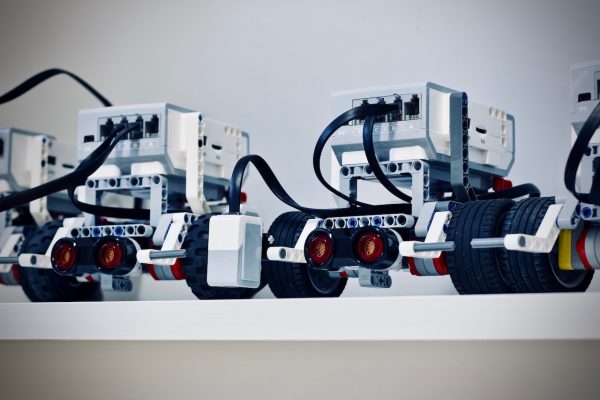

Fully functional robots no longer exist only in fiction. Today we have robots that serve on the front lines, imitate animals, act autonomously, and carry out basic human tasks. Rather than being wary of these advancements, be curious about how else they might benefit us in the future. Robots.net will be your guide as we journey into the world of robotics and show you how it evolves every day. From features to lists, we have material that will satisfy your curiosity.

![How To Make A Robot: Ultimate Guide [Updated 2020]](https://robots.net/wp-content/uploads/2019/10/Robot_Engines-600x487.jpg)